Advanced: Organizing Pipelines in Repositories & Workspaces#

In all of the examples we've seen so far, we've specified a file (-f) or a module (-m) in

order to tell the CLI tools how to load a pipeline, e.g.:

dagit -f hello_cereal.py

dagster pipeline execute -f hello_cereal.py

But most of the time, especially when working on long-running projects with other people, we will want to be able to target many pipelines at once with our tools.

Dagster provides the concept of a repository, a collection of pipelines (and other definitions) that

the Dagster tools can target as a whole. Repositories are declared using the RepositoryDefinition API as follows:

@repository

def hello_cereal_repository():

return [hello_cereal_pipeline, complex_pipeline]

This method returns a list of items, each one of which can be a pipeline, a schedule, or a partition set.

Lazy Construction#

Notice that this requires eager construction of all its member definitions. In large codebases, pipeline construction time can be large. In these cases, it may behoove you to only construct them on demand. For that, you can also return a dictionary of function references, which are constructed on demand:

@repository

def hello_cereal_repository():

# Note that we can pass a dict of functions, rather than a list of

# pipeline definitions. This allows us to construct pipelines lazily,

# if, e.g., initializing a pipeline involves any heavy compute

return {

"pipelines": {

"hello_cereal_pipeline": lambda: hello_cereal_pipeline,

"complex_pipeline": lambda: complex_pipeline,

}

}

Note that the name of the pipeline in the RepositoryDefinition must match the name we declared for

it in its pipeline (the default is the function name). Don't worry, if these names don't match,

you'll see a helpful error message.

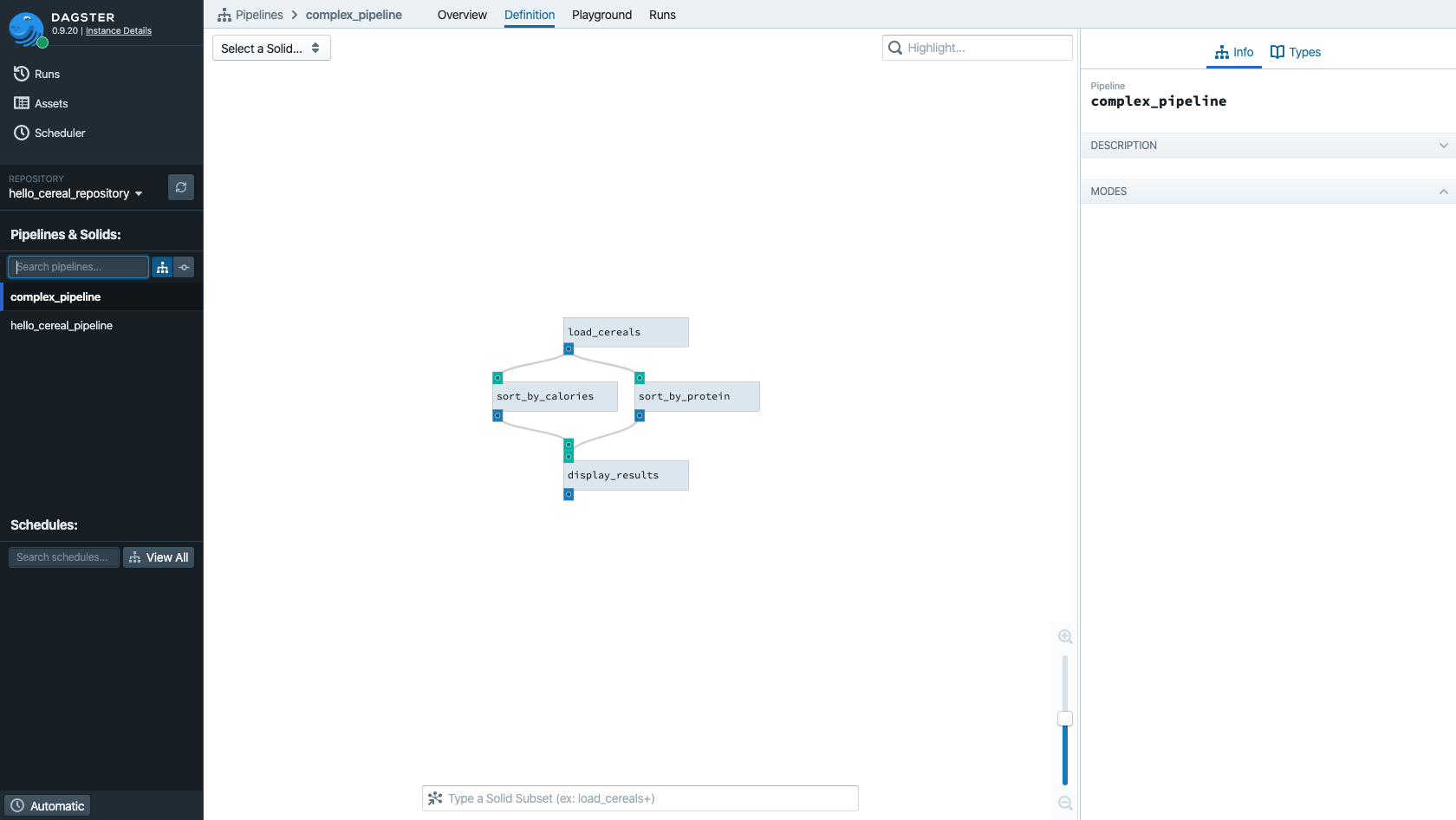

Repositories in Dagit#

If you save this file as repos.py, you can then run the command line tools on it. Try running:

dagit -f repos.py

Now you can see the list of all pipelines in the repo on the left, and you can search a pipeline by typing its name:

Workspaces#

Loading repositories via the -f or -m options is actually just a convenience function. The

underlying abstraction is the "workspace", which determines all of the available repositories

available to Dagit.

We have a file format for building workspaces called workspace.yaml. It is a convenience: it

prevents you from having to type the same -f or -m flag repeatedly. But as you'll see below it

enables more capabilities.

The following config will load pipelines from a file, just like the -f CLI option.

load_from:

- python_file: repos.py

Dagit will look for workspace.yaml in the current directory by default, so now you can launch

Dagit from that directory with no arguments.

dagit

Use the python_package config option to load pipelines from explicitly installed Python packages.

For example, you can pip install our tutorial code snippets as a Python package:

pip install -e docs_snippets # Run this in the `examples/` directory.

Then, you can configure your workspace.yaml to load pipelines from this package.

load_from:

- python_package: docs_snippets.intro_tutorial.advanced.repositories.repos

Supporting multiple repositories#

You can also use workspace.yaml to load multiple repositories. This can be useful for organization

purposes, in order to group pipelines and other artifacts by team.

load_from:

- python_package: docs_snippets.intro_tutorial.advanced.repositories.repos

- python_file: repos.py

Load it:

dagit -w multi_repo_workspace.yaml

And now you can switch between repositories in Dagit.

Multi-environment repositories#

Sometimes teams desire different Python versions or virtual environments. To support this, Dagster repositories each live in completely separate processes from each other, and tools like Dagit communicate with those repositories using a cross-process RPC protocol.

Via the workspace, you can configure a different executable_path for each of your repositories. For example:

load_from:

- python_file:

relative_path: "/path/to/team/pipelines.py"

executable_path: "/path/to/team/virtualenv/bin/python"

- python_package:

package_name: "other_team_package"

executable_path: "/path/to/other_/virtualenv/bin/python"

For this example to work, you need to change the executable paths to point a virtual environment available in your system, and point to a Python file or package that is available and loadable by that executable.